
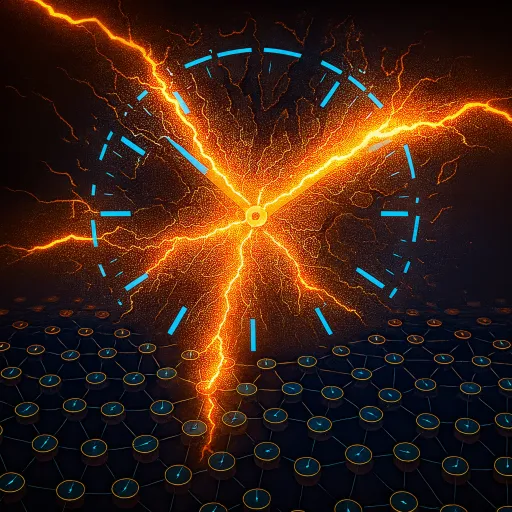

Yale Researchers Develop Scalable Neuromorphic Chips for AI

Researchers at Yale University have developed NeuroScale, a system for building neuromorphic chips that better mimic brain functionality while scaling to larger applications.

Neuromorphic chips are specialized integrated circuits designed to replicate the brain’s computational processes. Although they are not exact replicas of human neurons, multiple chips can be interconnected to form systems containing over a billion artificial neurons. Each neuron transmits information through a process called “spiking,” which lets neuromorphic systems consume considerably less energy than traditional computing methods. This event-driven operation also allows them to excel at specific tasks, such as distributed computing workloads.
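To make “spiking” concrete, here is a minimal sketch of a leaky integrate-and-fire neuron, a common textbook model of a spiking neuron. It is an illustration only: the article does not say which neuron model NeuroScale uses, and the function name `simulate_lif` and the `threshold`, `leak`, and `reset` values are hypothetical.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Return the time steps at which a leaky integrate-and-fire neuron spikes.

    All parameter values are illustrative assumptions, not NeuroScale's.
    """
    v = reset  # membrane potential
    spikes = []
    for t, current in enumerate(input_current):
        # The potential decays ("leaks") and then integrates the new input.
        v = leak * v + current
        if v >= threshold:
            # The neuron emits a spike (an event) and resets.
            spikes.append(t)
            v = reset
    return spikes


if __name__ == "__main__":
    print(simulate_lif([0.5, 0.5, 0.5, 0.0, 0.0, 1.2]))
```

The neuron produces output only at the steps where its potential crosses the threshold. With no input there are no spikes and nothing to communicate, which is the intuition behind the energy savings of event-driven operation.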

Despite their advantages, these chips have been difficult to scale. They typically depend on global synchronization protocols, which require all artificial neurons and synapses in the system to operate in unison. This reliance on a global barrier limits performance, because the entire system can run only as fast as its slowest component. The overhead of maintaining global synchronization can further slow processing across the network.

To address this issue, the team at Yale, led by Prof. Rajit Manohar, has introduced a novel approach with NeuroScale. Instead of using a single, central synchronization mechanism, NeuroScale employs a local, distributed system to synchronize clusters of neurons and synapses that are directly connected.
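The difference between a global barrier and local synchronization can be sketched with a toy timing model. This is not NeuroScale's actual mechanism. It assumes cores arranged in a ring, per-step work times supplied by the caller, and zero communication or synchronization overhead, and all function names are hypothetical.

```python
import random


def global_barrier_time(work):
    """work[t][i] is the time core i needs for step t.

    With a global barrier, every step lasts as long as its slowest core.
    """
    return sum(max(step) for step in work)


def local_sync_time(work, neighbors):
    """Each core waits only for itself and its direct neighbors.

    neighbors[i] lists the cores that core i is connected to.
    """
    n = len(neighbors)
    finish = [0.0] * n  # time at which each core finished its latest step
    for step in work:
        finish = [
            max(finish[j] for j in [i, *neighbors[i]]) + step[i]
            for i in range(n)
        ]
    return max(finish)


def ring_neighbors(n):
    """Assumed topology: each core talks to its left and right neighbor."""
    return [[(i - 1) % n, (i + 1) % n] for i in range(n)]


def demo(n_cores=64, n_steps=200, seed=0):
    """Compare both schemes on random per-step work times."""
    rng = random.Random(seed)
    work = [[rng.uniform(1.0, 2.0) for _ in range(n_cores)]
            for _ in range(n_steps)]
    return (global_barrier_time(work),
            local_sync_time(work, ring_neighbors(n_cores)))


if __name__ == "__main__":
    g, l = demo()
    print(f"global barrier: {g:.1f}  local sync: {l:.1f}")
```

In this model the locally synchronized system never finishes later than the globally synchronized one, because a slow core delays only its neighbors rather than every core at once. Real hardware would add communication costs that this sketch ignores.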

“Our NeuroScale uses a local, distributed mechanism to synchronize cores,” explained Congyang Li, a Ph.D. candidate and the lead author of the study. This synchronization strategy improves scalability, letting the system grow along with the biological networks it is meant to model. The researchers noted, “Our approach is only limited by the same scaling laws that would apply to the biological network being modeled.”

Looking ahead, the Yale team plans to move from simulation and prototyping to a silicon implementation of the NeuroScale chip. They are also exploring a hybrid model that combines NeuroScale's synchronization method with those of conventional neuromorphic chips, which could improve overall performance.

This advance in neuromorphic technology could pave the way for more efficient artificial intelligence systems that handle complex computations while minimizing energy consumption. As the field of artificial neural networks evolves, innovations like NeuroScale could help shape the future of computing.
